As I wrote recently, I’ve started using Azure Batch to run OpenAPS Autotune jobs for AutotuneWeb. The other day, however, I started a job of my own and got a notification that it was 48th in the queue. Either the service had suddenly become really popular, or something had gone wrong.

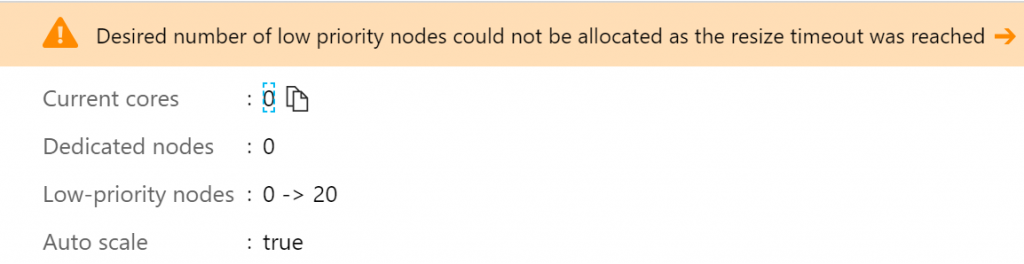

A quick look at the Azure Portal showed me that the Azure Batch pool was stuck trying to automatically scale up to 20 VMs, but it was getting a timeout error.

I initially wondered if the problem was caused by trying to go straight from 0 to 20 nodes, so I tried a few alternative scale formulas that asked for just a single dedicated node instead, but I still got the same error.
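As a rough illustration of what a "single dedicated node" formula can look like, here is a minimal sketch that builds one as a string. `$TargetDedicatedNodes` is the autoscale variable Batch reads for the dedicated node target; the helper name `fixed_size_formula` is made up for this example, and the formula is not necessarily the exact one tried here.

```python
def fixed_size_formula(dedicated_nodes: int) -> str:
    """Return an autoscale formula that pins the pool to a fixed node count."""
    if dedicated_nodes < 0:
        raise ValueError("node count cannot be negative")
    # $TargetDedicatedNodes is the service-defined variable that Batch
    # evaluates to decide how many dedicated nodes the pool should have.
    return f"$TargetDedicatedNodes = {dedicated_nodes};"


# A formula asking for exactly one dedicated node.
single_node = fixed_size_formula(1)
print(single_node)
```

Pinning the target to a constant like this takes the task-count logic out of the picture, which is useful when the question is simply whether the pool can allocate any node at all.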

I haven’t found a way to get detailed debugging information out at this point, but I wondered if there was some problem with the VM image I was using. The previous night I’d tidied up various unused resources from my previous implementations, so I thought I might have deleted something important.

Next I tried creating a single VM manually from the same image that the pool was using. That worked fine, so then I created a new image from that VM and created a new pool using the new image. The new pool using that image could scale just fine, so I tried creating a third pool using the original image. That one had the same problem, so there was definitely something up with the original VM image I was using.

To get things working, I moved all the pending jobs to use the pool with the new image while I tried to figure out what was up with the original one.

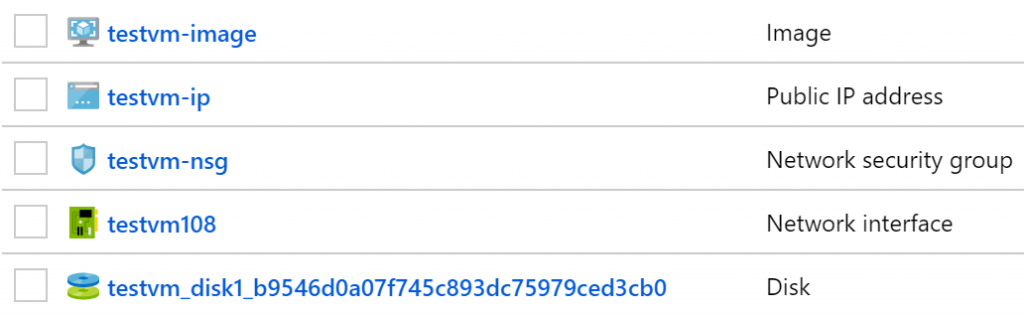

To figure it out without breaking the live system again, I created another new VM called testvm with the same image, then captured the image of it as testvm-image. Although the virtual machine itself has now been deleted, there are still 4 apparently unused resources left lying around, which are what I’d tried to tidy up the previous night:

- Public IP address
- Network security group
- Network interface
- Disk

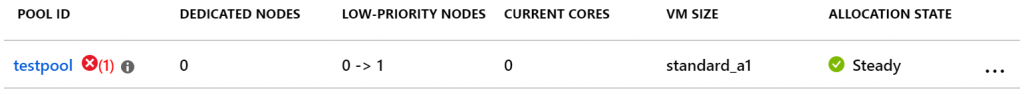

So, in the best traditions of trial and error, I created a new Azure Batch pool using the new image, then tried deleting each of these resources in turn and checking whether the pool could still resize afterwards.

I tried deleting the public IP address resource first, because it was at the top of the list, but that gave an error because it is linked to the network interface resource. Makes sense, so I deleted the network interface instead. Everything still worked fine.

I then deleted first the public IP address, then the network security group. Both times, the pool still resized correctly, so surely it was the disk resource that would trigger the problem…

Yes! Now that I’ve deleted the disk resource from my imaged VM, I can no longer resize the pool.
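The elimination process above boils down to a simple loop: delete one resource, check the pool, repeat. Here is a minimal sketch of that idea. The `delete_resource` and `pool_can_resize` callables are hypothetical stand-ins for the manual steps in the portal, not real Azure SDK calls, and the simulated behaviour just encodes what I observed.

```python
from typing import Callable, Iterable, Optional


def find_breaking_resource(
    resources: Iterable[str],
    delete_resource: Callable[[str], None],
    pool_can_resize: Callable[[], bool],
) -> Optional[str]:
    """Delete resources one at a time; return the first whose removal breaks resizing."""
    for name in resources:
        delete_resource(name)
        if not pool_can_resize():
            return name
    return None


# Simulated run: these stand-ins only model the observed behaviour.
remaining = {"network interface", "public IP address", "network security group", "disk"}


def fake_delete(name: str) -> None:
    remaining.discard(name)


def fake_can_resize() -> bool:
    # Assumption baked into the simulation: resizing works only while the disk exists.
    return "disk" in remaining


# The network interface goes first because the public IP address is linked to it.
order = ["network interface", "public IP address", "network security group", "disk"]
culprit = find_breaking_resource(order, fake_delete, fake_can_resize)
print(culprit)  # prints "disk"
```

Checking after every single deletion is slow, but it means the first failure points at exactly one resource.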

Now, I’m not quite sure what is causing the problem here. The image itself is still usable for creating VMs individually, apparently just not through Azure Batch. Presumably all the data required to create a VM is therefore wrapped up in the Image resource itself, but Azure Batch must have some additional dependency somewhere. I’d love to understand more, so if anyone can explain what’s going on here, please let me know.